Welcome to the Atlas Systems Blog

Driving innovation and success through thoughtful solutions and expert insights.

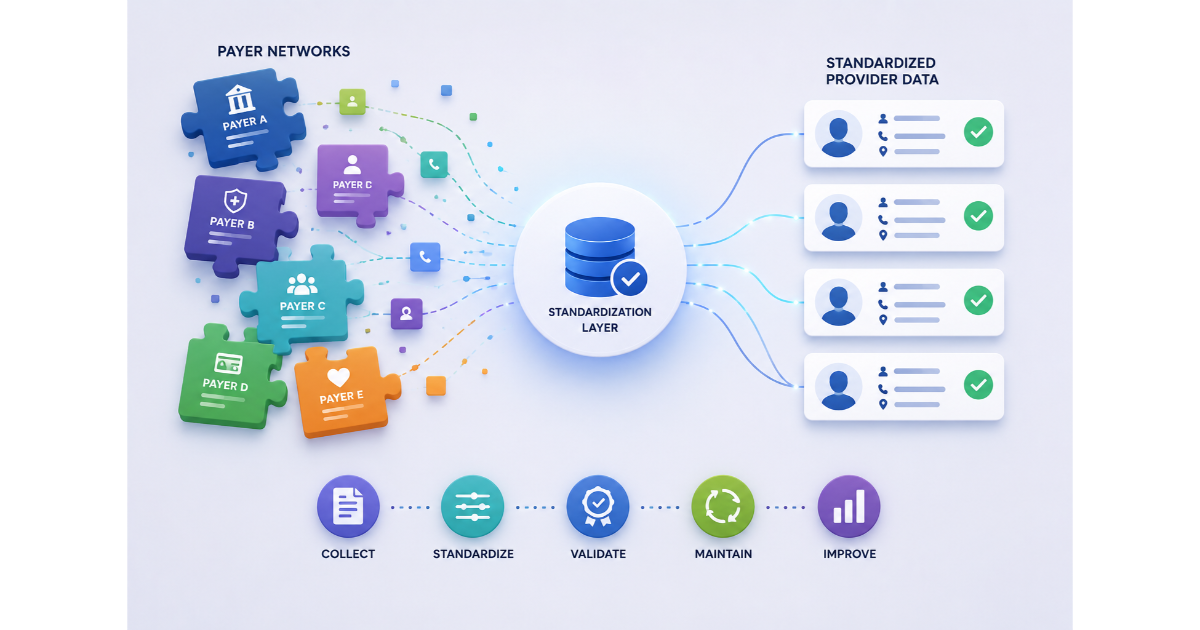

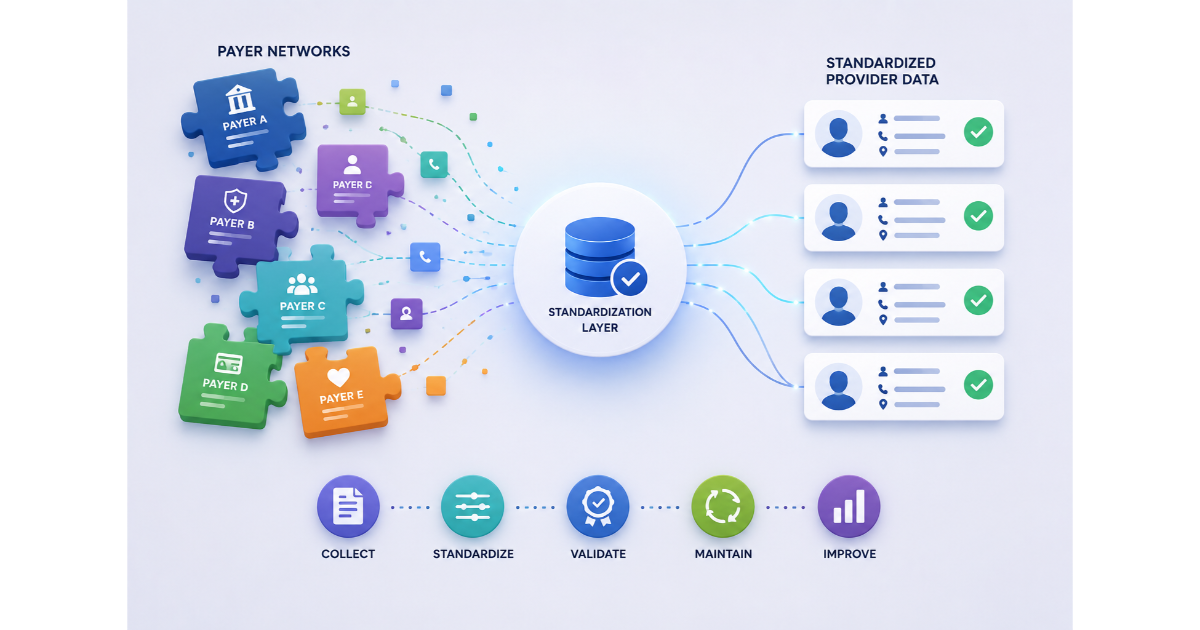

Optimize and secure provider data

Streamline provider-payer interactions

Verify real-time provider data

Verify provider data, ensure compliance

Create accurate, printable directories

Reduce patient wait times efficiently

